Around the world, motion-triggered camera traps capture millions of images of wildlife in natural environments. Camera traps are an inexpensive way to monitor wildlife with little disturbance, but they generate an enormous trove of data for biologists to sort through.

That prompted a team of scientists to harness deep learning, a branch of artificial intelligence built on deep neural networks, to take over this monumental task and aid wildlife research and conservation through faster, cheaper, automated image processing.

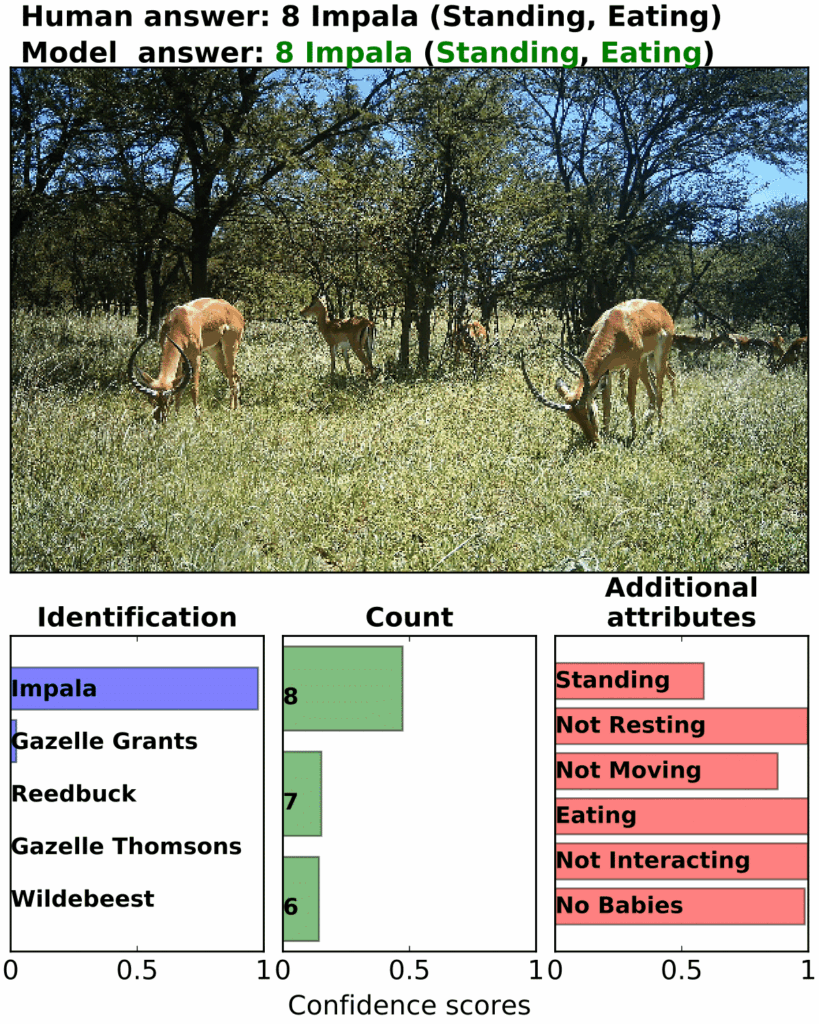

“These networks know how to recognize antlers, fur textures, spots, stripes, hooves, eyes,” said Jeff Clune, a co-author of the study published in Proceedings of the National Academy of Sciences. “You could take the trained models we developed and train them on your data from an ecosystem with different species. It will more quickly ramp up, so you have to provide fewer labeled images the second time around.”

Clune, Mohammad Norouzzadeh (lead author of the paper and a PhD candidate at the University of Wyoming) and their colleagues recently extended their models to camera trap photographs of North American animals from Florida to Canada, including invasive pigs (Sus scrofa), moose (Alces alces), quail, elk (Cervus canadensis), armadillos, turkeys (Meleagris gallopavo), horses (Equus ferus), rabbits, bobcats (Lynx rufus), deer (Odocoileus spp.), raccoons (Procyon lotor), mountain lions (Puma concolor), squirrels, black bears (Ursus americanus) and foxes (Vulpes spp.).

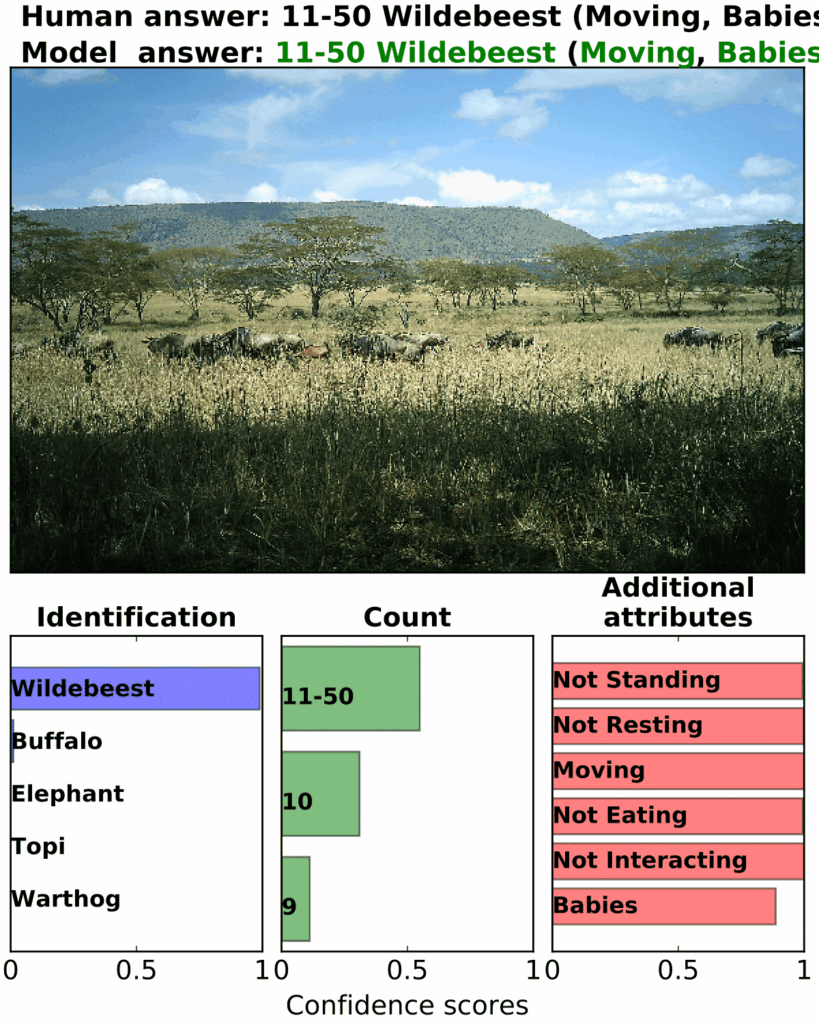

“The rocket fuel for deep learning is large datasets,” said Clune, a computer science professor at the University of Wyoming. “We have AI automatically take care of the easy photos to let people spend time labeling the challenging photos. The hope is to have a cycle in which humans label some data, AI tries to automate that task and humans help the AI by labeling what it cannot do until it can do the entire job.”

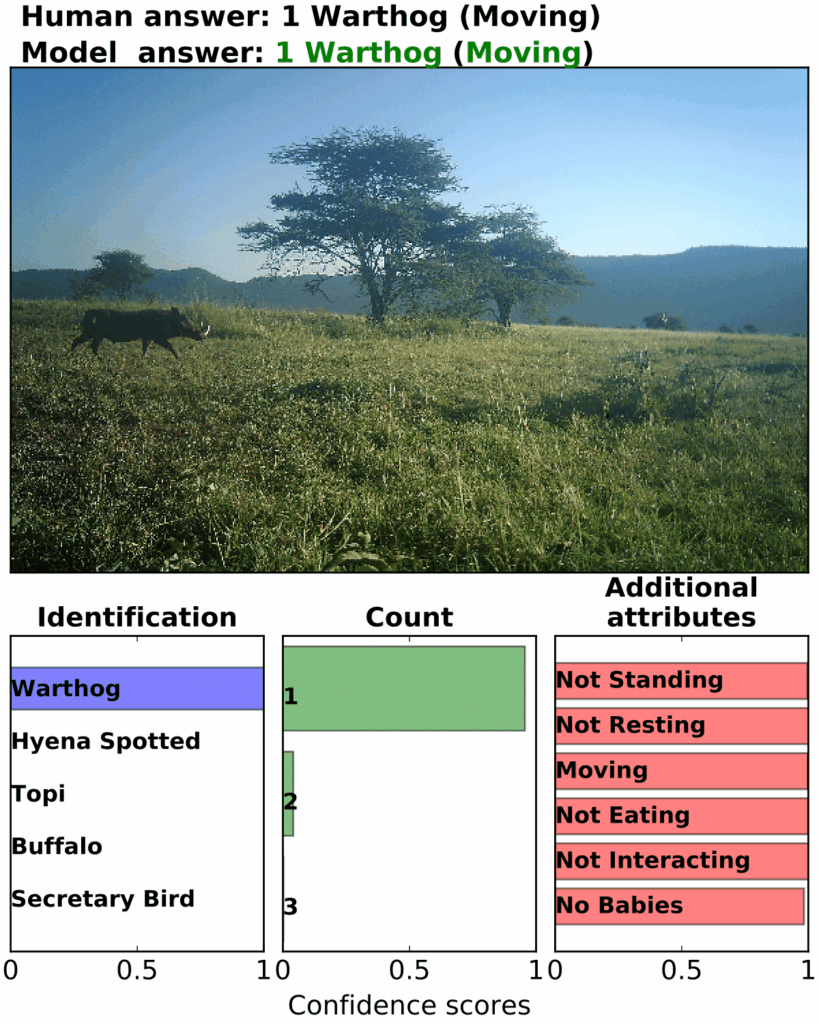

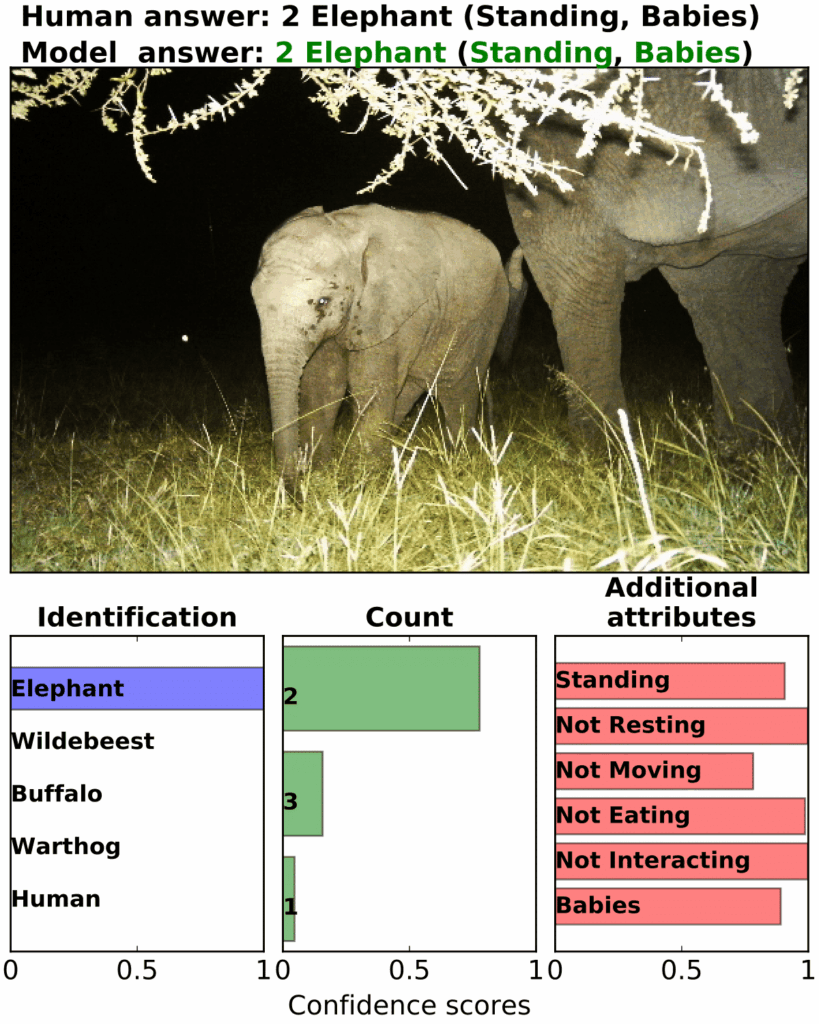

Clune and his team collaborated with the Snapshot Serengeti Project, a massive initiative with motion-sensitive cameras placed across Tanzania’s Serengeti National Park. The project previously relied on more than 50,000 citizen scientists, who volunteered upward of 17,000 hours online categorizing 3.2 million photographs by species and behavior. Using these labeled images, the researchers trained deep neural networks to recognize the same information automatically.

“Deep learning learns to do what you want as you provide it with labeled examples, much like you would a baby,” Clune said. “Do that a million times, and it figures out the rest. There’s a tiny computational brain inside the computer. Show it the image, give it the answer and repeat. The more pairs of image and answer, the better. There’s really good payback for labeling just a couple thousand images.”

The system also reports how confident it is in each classification, he said, and users can set a minimum confidence threshold that a prediction must meet before a photo is classified automatically.
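A confidence threshold like this is also what makes the human-in-the-loop cycle Clune describes work: confident predictions are accepted automatically, and the rest go to people. The sketch below is illustrative only, not the study's code. It assumes the network's confidence is the softmax probability of its top guess (a common choice, but the article does not say how the models compute it), and the names `Prediction`, `top_class_confidence` and `triage`, the 0.95 threshold and the example species scores are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Prediction:
    """One model output for a single camera trap image (hypothetical structure)."""
    image_id: str
    species: str
    confidence: float  # probability of the top guess, in [0, 1]


def top_class_confidence(logits, labels):
    """Turn raw network scores into (best label, probability) via softmax.

    Assumption: confidence = softmax probability of the highest-scoring class.
    """
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]


def triage(predictions, min_confidence=0.95):
    """Split predictions into auto-accepted labels and images for human review.

    Images whose top-guess confidence meets the threshold are labeled
    automatically; everything else is queued for a person to label.
    """
    if not 0.0 <= min_confidence <= 1.0:
        raise ValueError("min_confidence must be between 0 and 1")
    auto_labeled = {}
    needs_review = []
    for p in predictions:
        if p.confidence >= min_confidence:
            auto_labeled[p.image_id] = p.species
        else:
            needs_review.append(p.image_id)
    return auto_labeled, needs_review


if __name__ == "__main__":
    preds = [
        Prediction("img_001", "zebra", 0.99),
        Prediction("img_002", "wildebeest", 0.97),
        Prediction("img_003", "gazelle", 0.62),
        Prediction("img_004", "empty", 0.40),
    ]
    labeled, review = triage(preds, min_confidence=0.95)
    print(labeled)  # {'img_001': 'zebra', 'img_002': 'wildebeest'}
    print(review)   # ['img_003', 'img_004']
```

Raising `min_confidence` sends more photos to human reviewers but makes the automatic labels more trustworthy; lowering it automates more of the workload at the cost of more mistakes. The labels people supply for the review queue can then be fed back as new training data.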

The trained neural networks are freely available online, and the authors are also partnering with professionals to adapt the technique to recognizing individual animals, poachers and animals involved in conflicts with humans.

“This is going to potentially transform many fields into big data sciences,” Clune said. “There are tremendous opportunities for conservation biology and wildlife ecology. It’s exciting because this technology is so helpful and ready to be deployed. The data just flows back to you, and you immediately start understanding what’s going on in an ecosystem.”